
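Environment

Alibaba Cloud Elasticsearch cluster, version 5.0

Microsoft Azure Elasticsearch cluster, version 5.6

Requirements

Migrate both new and historical data from the Alibaba Cloud Elasticsearch cluster to the Azure Elasticsearch cluster.

Solution

New data is the easy part: point the data source at the new Kafka cluster on Azure and let Logstash on Azure consume it into the new Elasticsearch cluster. Migrating the historical data is more involved, but the official documentation offers a mature approach: snapshot and restore. What follows is a record of how the migration was carried out.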

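Implementation

Operations on the Alibaba Cloud Elasticsearch cluster

I. Disable shard allocation first. Work through the nodes one at a time: for each node whose process is restarted, set allocation to none and then back to all. Otherwise shards get reshuffled across the cluster and recovery takes a very long time.

1. Edit elasticsearch.yml and add the following: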

path.repo: /storage/esdata

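This sets the path used for index snapshot backups.

Note: every master node and every data node needs this setting.

2. Disable automatic shard allocation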

curl -XPUT http://10.10.88.86:9200/_cluster/settings -d'

{

"transient" : {

"cluster.routing.allocation.enable" : "none"

}

}'

Elasticsearch log

[root@elk-es01 storage]# tail -f es-cluster.log

[2018-05-11T15:24:15,605][INFO ][o.e.c.s.ClusterSettings ] [elk-es01] updating [cluster.routing.allocation.enable] from [ALL] to [none]

3. Restart Elasticsearch

4. Re-enable automatic shard allocation

curl -XPUT http://10.10.88.86:9200/_cluster/settings -d'

{

"transient" : {

"cluster.routing.allocation.enable" : "all"

}

}'

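5. Repeat steps 1 to 4 on each of the remaining nodes. It is best to check /_cat/health and wait until the cluster has recovered to 100% before moving on to the next node. If Elasticsearch is restarted without disabling allocation, recovery starts from 0% and takes a long time; with allocation disabled, recovery starts from roughly (number of nodes - 1) / (total number of nodes) and finishes much faster.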
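The none / restart / all cycle described above can be sketched in Python as follows. This is only an illustration under stated assumptions: `restart_node` is a hypothetical callable supplied by the caller (for example an ssh command that restarts the service), `ES_URL` is assumed to point at a node that stays reachable while the others restart, and the health check treats "green with no initializing or unassigned shards" as the equivalent of 100% recovered.

```python
import json
import time
import urllib.request

# Node address taken from the curl commands above; assumed to stay reachable.
ES_URL = "http://10.10.88.86:9200"


def allocation_body(mode):
    """Build the transient settings body for cluster.routing.allocation.enable."""
    if mode not in ("none", "all"):
        raise ValueError("mode must be 'none' or 'all'")
    return json.dumps({"transient": {"cluster.routing.allocation.enable": mode}})


def set_allocation(mode, base_url=ES_URL):
    """PUT the allocation setting, mirroring the curl calls in steps 2 and 4."""
    req = urllib.request.Request(
        base_url + "/_cluster/settings",
        data=allocation_body(mode).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def get_health(base_url=ES_URL):
    """Fetch and parse the _cluster/health response."""
    with urllib.request.urlopen(base_url + "/_cluster/health") as resp:
        return json.load(resp)


def is_recovered(health):
    """True once the cluster is green with no initializing or unassigned shards."""
    return (
        health.get("status") == "green"
        and health.get("initializing_shards", 0) == 0
        and health.get("unassigned_shards", 0) == 0
    )


def rolling_restart(nodes, restart_node, poll_seconds=10):
    """Restart nodes one at a time, waiting for full recovery between nodes."""
    for node in nodes:
        set_allocation("none")
        restart_node(node)  # hypothetical: e.g. ssh to the node and restart the service
        set_allocation("all")
        while not is_recovered(get_health()):
            time.sleep(poll_seconds)
```

Nothing runs at import time; `rolling_restart` would be called with the list of node names and whatever restart mechanism is in use.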

II. Configure the snapshot repository

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics -d'

{

"type": "fs",

"settings": {

"location": "/storage/esdata",

"compress": true,

"max_snapshot_bytes_per_sec" : "50mb",

"max_restore_bytes_per_sec" : "50mb"

}

}'

An error to watch out for

The request fails with a permission error:

store location [/storage/esdata] is not accessible on the node [{elk-es04}

{"error":{"root_cause":[{"type":"repository_verification_exception","reason":"[client_statistics] [[TgGhv7V1QGagb_PNDyXM-w, 'RemoteTransportException[[elk-es04][/10.10.88.89:9300][internal:admin/repository/verify]]; nested: RepositoryVerificationException[[client_statistics] store location [/storage/esdata] is not accessible on the node [{elk-es04}{TgGhv7V1QGagb_PNDyXM-w}{7dxKWcF3QreKMZOKhTFgeg}{/10.10.88.89}{/10.10.88.89:9300}]]; nested: AccessDeniedException[/storage/esdata/tests-_u6h8XrJQ32dYPaC7zFC_w/data-TgGhv7V1QGagb_PNDyXM-w.dat];']]"}],"type":"repository_verification_exception","reason":"[client_statistics] [[TgGhv7V1QGagb_PNDyXM-w, 'RemoteTransportException[[elk-es04][10.51.57.54:9300][internal:admin/repository/verify]]; nested: RepositoryVerificationException[[client_statistics] store location [/storage/esdata] is not accessible on the node [{elk-es04}{TgGhv7V1QGagb_PNDyXM-w}{7dxKWcF3QreKMZOKhTFgeg}{/10.10.88.89}{/10.10.88.89:9300}]]; nested: AccessDeniedException[/storage/esdata/tests-_u6h8XrJQ32dYPaC7zFC_w/data-TgGhv7V1QGagb_PNDyXM-w.dat];']]"},"status":500}

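Fix:

It turned out that the es user has uid/gid 500 on node1, node2 and node3 but 501 on node4. I have been unlucky lately; every small problem seems to run into something unexpected. A quick search turned up https://discuss.elastic.co/t/why-does-creating-a-repository-fail/22697/16, which confirms that this is a permission problem. NFS enforces some policies here, and after weighing the options I wanted a fix that would work quickly.

I decided to change both the uid and the gid of the es user on node4 to 500, so that it matches the first three nodes.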

https://blog.csdn.net/train006l/article/details/79007483

usermod -u 500 es

groupmod -g 500 es

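Note that usermod requires that the es user has no running processes, which means the Elasticsearch process must be stopped first; repeat the none / all cycle described above. Also, if another user already occupies id 500, change that user accordingly and mind its processes.

If in doubt, ownership can also be fixed by hand, mainly for /home/es and the es data directories: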

find / -user 501 -exec chown -h es {} \;

find / -group 501 -exec chgrp -h es {} \;
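Because an fs repository on NFS depends on the es user having the same numeric uid and gid on every node, it can help to compare the output of `id es` across nodes before registering the repository. The following is a minimal sketch, assuming the `id` output has been collected per node by some other means; the helper names are hypothetical, and the sample values in the usage note are illustrative rather than captured from the cluster.

```python
import re

# Matches the leading "uid=500(es) gid=500(es)" part of `id` output.
ID_PATTERN = re.compile(r"uid=(\d+)\([^)]*\)\s+gid=(\d+)\([^)]*\)")


def parse_id_output(line):
    """Extract (uid, gid) as integers from one line of `id <user>` output."""
    match = ID_PATTERN.search(line)
    if match is None:
        raise ValueError("unrecognised id output: %r" % line)
    return int(match.group(1)), int(match.group(2))


def find_mismatched_nodes(id_outputs):
    """Return the nodes whose (uid, gid) differs from the most common pair."""
    parsed = {node: parse_id_output(line) for node, line in id_outputs.items()}
    pairs = list(parsed.values())
    majority = max(set(pairs), key=pairs.count)
    return sorted(node for node, pair in parsed.items() if pair != majority)
```

Called with a mapping such as `{"elk-es01": "uid=500(es) gid=500(es) groups=500(es)", "elk-es04": "uid=501(es) gid=501(es) groups=501(es)", ...}`, it returns the node names that need the usermod / groupmod treatment.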

Running the request again no longer fails:

[root@elk-es01 ~]# curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics -d'

{

"type": "fs",

"settings": {

"location": "/storage/esdata",

"compress" : "true",

"max_snapshot_bytes_per_sec" : "50mb",

"max_restore_bytes_per_sec" : "50mb"

}

}'

{"acknowledged":true}[root@elk-es01 ~]#

Check the configuration

[root@elk-es01 ~]# curl -XGET http://10.10.88.86:9200/_snapshot/client_statistics?pretty

{

"client_statistics" : {

"type" : "fs",

"settings" : {

"location" : "/storage/esdata",

"max_restore_bytes_per_sec" : "50mb",

"compress" : "true",

"max_snapshot_bytes_per_sec" : "50mb"

}

}

}

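III. Snapshot the indices to be migrated

Note: when there are many indices but each holds little data, a wildcard can match more indices per snapshot. Keep the amount of data in each batch manageable, for example under 100 GB, so that a network problem or similar does not interrupt the transfer.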
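One way to keep each batch under a size limit is to group indices greedily by size. The sketch below assumes the per-index sizes in bytes are already known (for example read from `_cat/indices`); `batch_indices` is a hypothetical helper, not part of any Elasticsearch client.

```python
def batch_indices(index_sizes, max_bytes):
    """Greedily group (name, size_in_bytes) pairs into batches of at most max_bytes.

    Indices are processed in name order, which is chronological for
    date-suffixed names. An index larger than max_bytes gets its own batch.
    """
    batches, current, current_size = [], [], 0
    for name, size in sorted(index_sizes):
        # Close the current batch if adding this index would exceed the limit.
        if current and current_size + size > max_bytes:
            batches.append(current)
            current, current_size = [], 0
        current.append(name)
        current_size += size
    if current:
        batches.append(current)
    return batches
```

Each resulting batch can then be joined with commas and used as the "indices" value of one snapshot request.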

[root@elk-es01 ~]# curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.05.11 -d'

{

"indices": "logstash-nginx-accesslog-2018.05.11"

}'

{"accepted":true}[root@elk-es01 ~]#

For May 2018, only the first days of the month are included:

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.05 -d'

{

"indices": "logstash-nginx-accesslog-2018.05.0*"

}'

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.04 -d'

{

"indices": "logstash-nginx-accesslog-2018.04*"

}'

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.03 -d'

{

"indices": "logstash-nginx-accesslog-2018.03*"

}'

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.02 -d'

{

"indices": "logstash-nginx-accesslog-2018.02*"

}'

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.01 -d'

{

"indices": "logstash-nginx-accesslog-2018.01*"

}'

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2017.12 -d'

{

"indices": "logstash-nginx-accesslog-2017.12*"

}'

curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2017.11 -d'

{

"indices": "logstash-nginx-accesslog-2017.11*"

}'

Example

[root@elk-es01 ~]# curl -XPUT http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2017.11 -d'

{

"indices": "logstash-nginx-accesslog-2017.11*"

}'

{"accepted":true}[root@elk-es01 ~]#

[root@elk-es01 ~]# curl -XGET http://10.10.88.86:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2017.11?pretty

{

"snapshots" : [

{

"snapshot" : "logstash-nginx-accesslog-2017.11",

"uuid" : "PwPlyCbQQliZY3saog45LA",

"version_id" : 5000299,

"version" : "5.0.2",

"indices" : [

"logstash-nginx-accesslog-2017.11.20",

"logstash-nginx-accesslog-2017.11.17",

"logstash-nginx-accesslog-2017.11.24",

"logstash-nginx-accesslog-2017.11.30",

"logstash-nginx-accesslog-2017.11.22",

"logstash-nginx-accesslog-2017.11.18",

"logstash-nginx-accesslog-2017.11.15",

"logstash-nginx-accesslog-2017.11.16",

"logstash-nginx-accesslog-2017.11.27",

"logstash-nginx-accesslog-2017.11.26",

"logstash-nginx-accesslog-2017.11.19",

"logstash-nginx-accesslog-2017.11.21",

"logstash-nginx-accesslog-2017.11.28",

"logstash-nginx-accesslog-2017.11.23",

"logstash-nginx-accesslog-2017.11.25",

"logstash-nginx-accesslog-2017.11.29"

],

"state" : "IN_PROGRESS",

"start_time" : "2018-05-14T02:31:58.900Z",

"start_time_in_millis" : 1526265118900,

"failures" : [ ],

"shards" : {

"total" : 0,

"failed" : 0,

"successful" : 0

}

}

]

}

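Note that state is IN_PROGRESS here; the snapshot is complete once it changes to SUCCESS. Wait for SUCCESS before starting the next snapshot. If there are only a few indices, they can also be snapshotted in one go instead of being split up.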
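Waiting for a snapshot to leave IN_PROGRESS before starting the next one can be automated with a small polling loop. This is a sketch, not the method used in the original run: `fetch` is a hypothetical caller-supplied function that performs the GET request shown above and returns the parsed JSON.

```python
import time


def snapshot_state(response):
    """Return the state of the first snapshot in a GET _snapshot/<repo>/<name> response."""
    return response["snapshots"][0]["state"]


def wait_for_snapshot(fetch, poll_seconds=30, sleep=time.sleep):
    """Poll fetch() until the snapshot is no longer IN_PROGRESS.

    Returns the final state so the caller can check for SUCCESS and stop
    on anything else (for example FAILED or PARTIAL).
    """
    while True:
        state = snapshot_state(fetch())
        if state != "IN_PROGRESS":
            return state
        sleep(poll_seconds)
```

The `sleep` parameter exists only so that the loop can be exercised without real delays; in normal use the default is fine.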

Operations on the Azure Elasticsearch cluster

IV. Migrate the data to the Azure Elasticsearch cluster

1. Mount the NFS share

yum -y install nfs-utils

mkdir -p /storage/esdata

mount -t nfs 192.168.88.20:/data/es-data01/backup /storage/esdata/

df -h

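Every master node and every data node needs to mount the NFS share.

Copy the snapshot data from Alibaba Cloud to the Azure NFS server with scp. Pack the data into a tar archive first and extract it after the copy; before running scp, connectivity between the two sides must be established through open***.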

scp test@10.10.88.89:/storage/esdata/es20180514.tar.gz ./

scp test@10.10.88.89:/storage/esdata/indices20180514.tar.gz ./

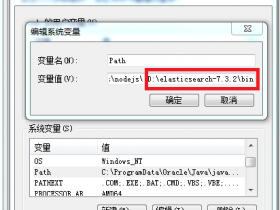

2. Update the configuration of the new cluster

1) Edit elasticsearch.yml and add the following:

path.repo: /storage/esdata

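# Sets the path used for index snapshot backups

Note: every master node and every data node needs this setting.

2) Disable automatic shard allocation, restart, and re-enable it, the same procedure as above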

curl -XPUT http://192.168.88.24:9200/_cluster/settings -d'

{

"transient" : {

"cluster.routing.allocation.enable" : "none"

}

}'

sleep 3

/etc/init.d/elasticsearch restart

curl -XPUT http://192.168.88.24:9200/_cluster/settings -d'

{

"transient" : {

"cluster.routing.allocation.enable" : "all"

}

}'

curl http://192.168.88.20:9200/_cluster/health?pretty

3. Restore the snapshots

1) First register the repository

curl -XPUT http://192.168.88.20:9200/_snapshot/client_statistics -d'

{

"type": "fs",

"settings": {

"location": "/storage/esdata",

"compress" : "true",

"max_snapshot_bytes_per_sec" : "50mb",

"max_restore_bytes_per_sec" : "50mb"

}

}'

2) Then restore the indices

[root@prod-elasticsearch-master-01 es]# curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.05/_restore

{"accepted":true}

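Run the remaining restores one after another. The next restore only works correctly once the current one has finished. Restored indices start out yellow; once they have all turned green, everything is healthy.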

curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.04/_restore

curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.03/_restore

curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.02/_restore

curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.01/_restore

curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2017.12/_restore

curl -XPOST http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2017.11/_restore
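The "one restore at a time, wait for green" rule above can be expressed as a small driver loop. This is a sketch under assumptions: `start_restore` and `wait_until_green` are hypothetical callables supplied by the caller (for instance a POST to the URL built by `restore_url`, and a loop polling `/_cluster/health`), and the address and repository name are simply the ones used in the commands above.

```python
# Address and repository name as used in the curl commands above.
ES_URL = "http://192.168.88.20:9200"
REPOSITORY = "client_statistics"


def restore_url(snapshot, base_url=ES_URL, repository=REPOSITORY):
    """Build the _restore endpoint URL for one snapshot."""
    return "%s/_snapshot/%s/%s/_restore" % (base_url, repository, snapshot)


def restore_in_order(snapshots, start_restore, wait_until_green):
    """Restore snapshots strictly one after another.

    start_restore(name) kicks off a restore; wait_until_green() blocks until
    the cluster is green again. Both are provided by the caller.
    """
    finished = []
    for name in snapshots:
        start_restore(name)
        wait_until_green()
        finished.append(name)
    return finished
```

Keeping the HTTP calls outside the loop makes the ordering logic easy to check on its own before pointing it at a real cluster.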

3) Finally, check the status

[root@prod-elasticsearch-master-01 es]# curl -XGET http://192.168.88.20:9200/_snapshot/client_statistics/logstash-nginx-accesslog-2018.05/_status?pretty

{

"snapshots" : [

{

"snapshot" : "logstash-nginx-accesslog-2018.05",

"repository" : "client_statistics",

"uuid" : "VL9LHHUKTNCHx-xsVJD_eA",

"state" : "SUCCESS",

"shards_stats" : {

"initializing" : 0,

"started" : 0,

"finalizing" : 0,

"done" : 45,

"failed" : 0,

"total" : 45

},

"stats" : {

"number_of_files" : 4278,

"processed_files" : 4278,

"total_size_in_bytes" : 22892376668,

"processed_size_in_bytes" : 22892376668,

"start_time_in_millis" : 1526280514416,

"time_in_millis" : 505655

},

"indices" : {

"logstash-nginx-accesslog-2018.05.08" : {

"shards_stats" : {

"initializing" : 0,

"started" : 0,

"finalizing" : 0,

"done" : 5,

"failed" : 0,

"total" : 5

},

"stats" : {

"number_of_files" : 524,

"processed_files" : 524,

"total_size_in_bytes" : 2617117488,

"processed_size_in_bytes" : 2617117488,

"start_time_in_millis" : 1526280514420,

"time_in_millis" : 260276

},

"shards" : {

"0" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 67,

"processed_files" : 67,

"total_size_in_bytes" : 569057817,

"processed_size_in_bytes" : 569057817,

"start_time_in_millis" : 1526280514420,

"time_in_millis" : 68086

}

},

"1" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 124,

"processed_files" : 124,

"total_size_in_bytes" : 499182013,

"processed_size_in_bytes" : 499182013,

"start_time_in_millis" : 1526280514446,

"time_in_millis" : 62925

}

},

"2" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 109,

"processed_files" : 109,

"total_size_in_bytes" : 478469125,

"processed_size_in_bytes" : 478469125,

"start_time_in_millis" : 1526280698072,

"time_in_millis" : 76624

}

},

"3" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 124,

"processed_files" : 124,

"total_size_in_bytes" : 546347244,

"processed_size_in_bytes" : 546347244,

"start_time_in_millis" : 1526280653094,

"time_in_millis" : 103590

}

},

"4" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 100,

"processed_files" : 100,

"total_size_in_bytes" : 524061289,

"processed_size_in_bytes" : 524061289,

"start_time_in_millis" : 1526280514456,

"time_in_millis" : 69113

}

}

}

},

"logstash-nginx-accesslog-2018.05.09" : {

"shards_stats" : {

"initializing" : 0,

"started" : 0,

"finalizing" : 0,

"done" : 5,

"failed" : 0,

"total" : 5

},

"stats" : {

"number_of_files" : 425,

"processed_files" : 425,

"total_size_in_bytes" : 2436583034,

"processed_size_in_bytes" : 2436583034,

"start_time_in_millis" : 1526280514425,

"time_in_millis" : 505646

},

"shards" : {

"0" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 94,

"processed_files" : 94,

"total_size_in_bytes" : 462380313,

"processed_size_in_bytes" : 462380313,

"start_time_in_millis" : 1526280971948,

"time_in_millis" : 48123

}

},

"1" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 103,

"processed_files" : 103,

"total_size_in_bytes" : 506505727,

"processed_size_in_bytes" : 506505727,

"start_time_in_millis" : 1526280851761,

"time_in_millis" : 69562

}

},

"2" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 73,

"processed_files" : 73,

"total_size_in_bytes" : 506830214,

"processed_size_in_bytes" : 506830214,

"start_time_in_millis" : 1526280514425,

"time_in_millis" : 60508

}

},

"3" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 52,

"processed_files" : 52,

"total_size_in_bytes" : 494390868,

"processed_size_in_bytes" : 494390868,

"start_time_in_millis" : 1526280593311,

"time_in_millis" : 52673

}

},

"4" : {

"stage" : "DONE",

"stats" : {

"number_of_files" : 103,

"processed_files" : 103,

"total_size_in_bytes" : 466475912,

"processed_size_in_bytes" : 466475912,

"start_time_in_millis" : 1526280583835,

"time_in_millis" : 64169

}

}

}

}

}

}

]

}
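A `_status` response of this size is easier to check when reduced to a few numbers. The following sketch assumes the response has the structure shown above and has already been parsed from JSON; `summarize_status` is a hypothetical helper.

```python
def summarize_status(status):
    """Summarise the first snapshot in a parsed _status response."""
    snap = status["snapshots"][0]
    shards = snap["shards_stats"]
    stats = snap["stats"]
    seconds = stats["time_in_millis"] / 1000.0
    return {
        "state": snap["state"],
        "shards_done": shards["done"],
        "shards_total": shards["total"],
        "shards_failed": shards["failed"],
        # True when every file listed for the snapshot has been processed.
        "files_complete": stats["processed_files"] == stats["number_of_files"],
        # Average throughput over the whole snapshot, in bytes per second.
        "bytes_per_second": stats["total_size_in_bytes"] / seconds if seconds else 0.0,
    }
```

For the output above (22892376668 bytes in 505655 ms), this works out to roughly 45 MB per second, with 45 of 45 shards done and none failed.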
